Challenge

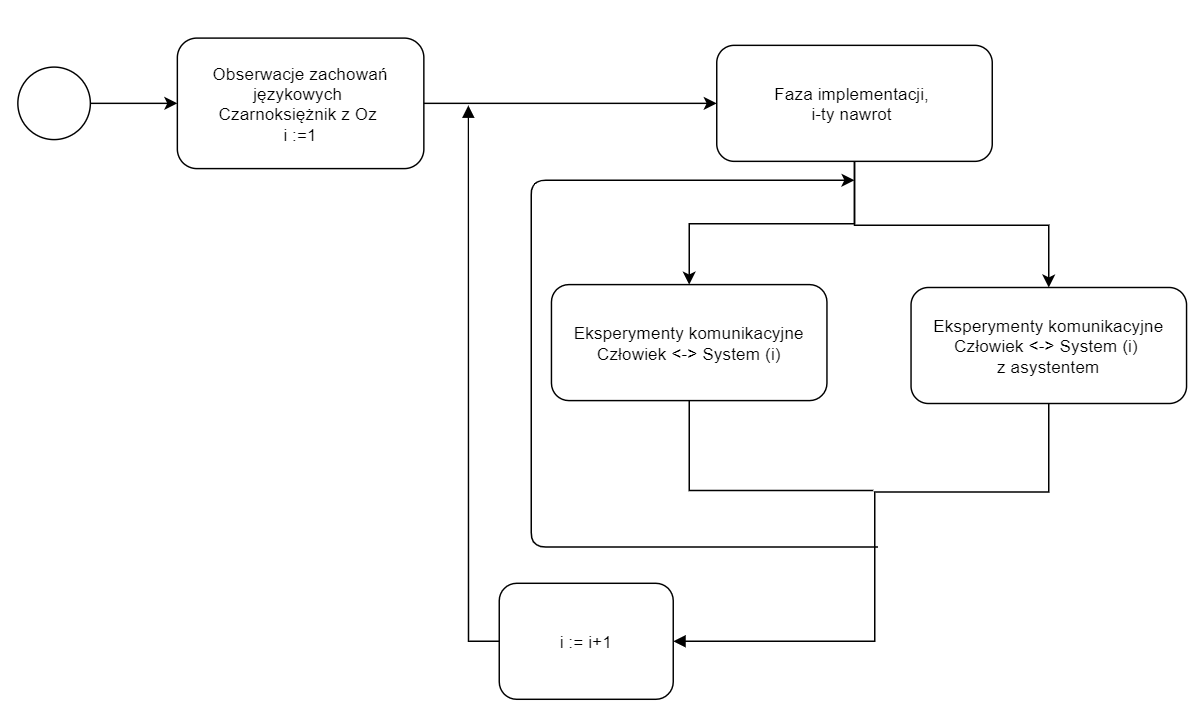

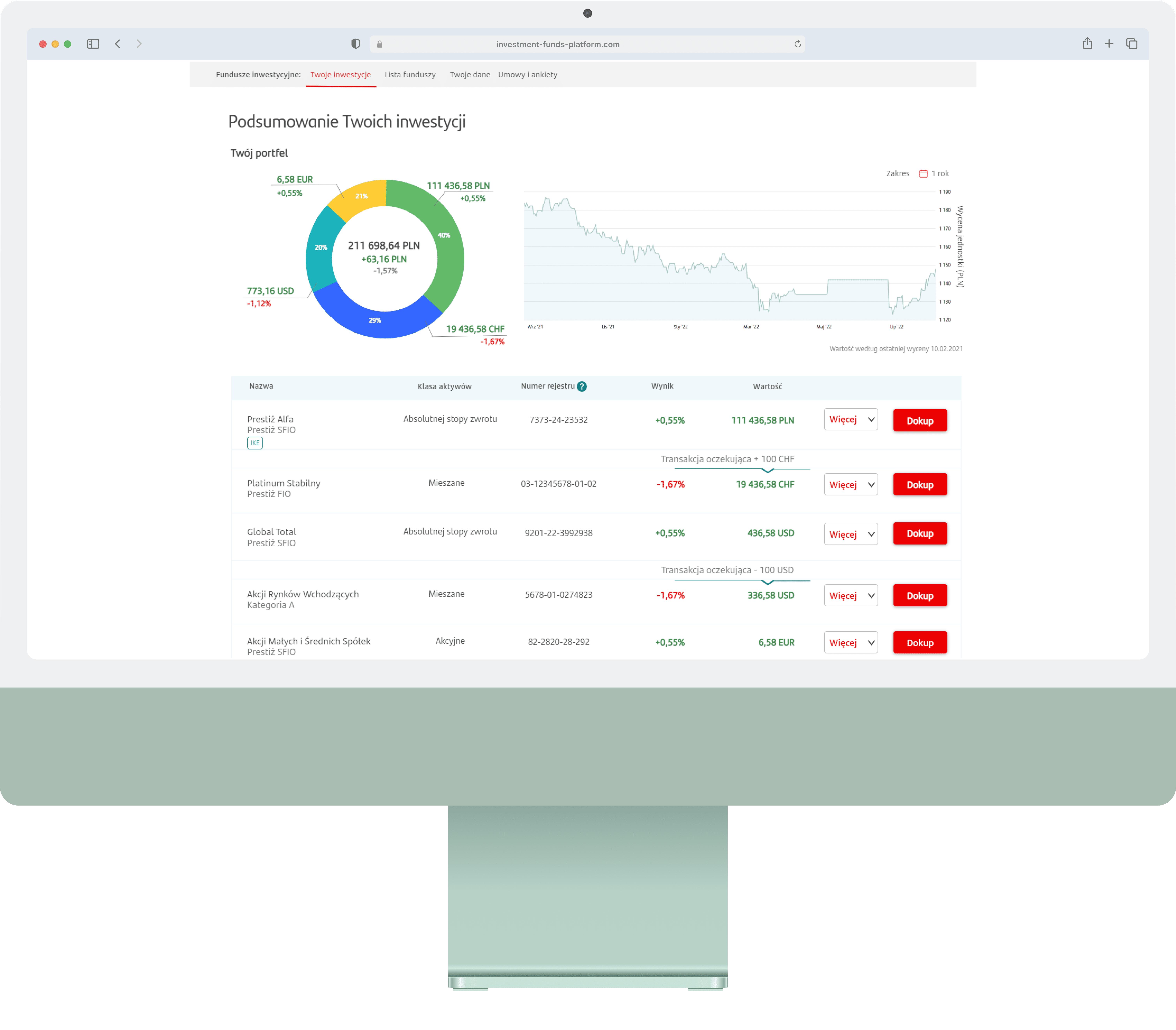

NLP-powered components are embedded in almost every product we use, but standard UX methods were designed for visual interfaces, not for systems whose core interaction happens through language. How do people actually behave when they interact with linguistic interface components?

Role

Sole Researcher & Designer: designed and ran the full study, including survey creation, participant recruitment, usability test facilitation, Wizard of Oz sessions, and data analysis.

UX Research

Usability Testing

Survey Design

Data Analysis

Impact

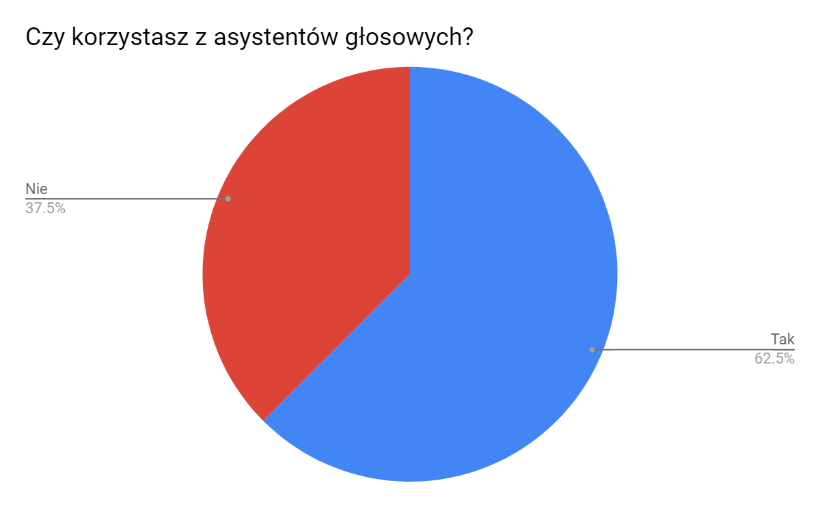

5 actionable findings and 4 design principles for linguistic interface UX, validated across 3 live systems with 88 survey respondents and 19 usability test participants.

Duration

6 months